What does "Radical: Taking Back Your Faith from the American Dream" critically examine?

American education

American pop culture

American Christianity

American politics

Answer

David Platt's "Radical: Taking Back Your Faith from the American Dream" critically examines American Christianity. It argues that American Christianity has become too focused on material wealth and success, and has lost sight of the teachings of Jesus Christ as a result. It calls for a return to a more radical Christianity focused on social justice and helping those in need.

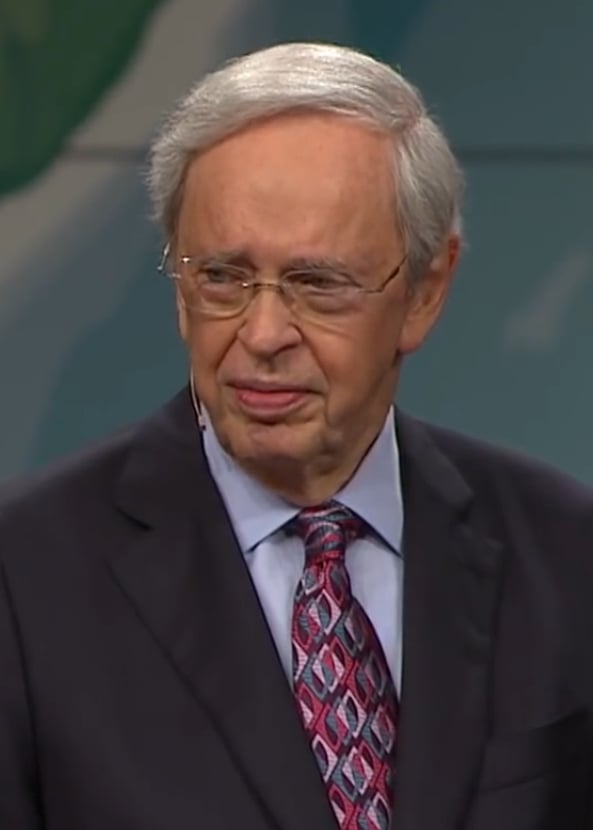

The Inspiring Journey of David Platt: A Quiz on the Life and Teachings of an Influential Pastor

More Questions

What does the sequel "Radical Together" encourage?

Which book by Platt gives a clarion call to die and live for Christ?

How long did David Platt serve at the Church at Brook Hills?

What significant change happened in Platt's career in 2014?

How old was David Platt when he became the youngest megachurch pastor in the US?
